Marketing Data and Infrastructure on AWS

In the previous blog post in this series, we delved into the intricacies of Marketing Data and Infrastructure on AWS. We explored common terminology and the major disaster recovery strategies, with an emphasis on the Backup & Restore and Pilot Light approaches.

In this segment, we’ll dive deeper into Marketing Data and Infrastructure on AWS by focusing on two more disaster recovery strategies:

- Warm Standby: a scaled-down but fully functional copy of production running in a second region.

- Multi-Site and Hot-Site Approach: full production capacity running in more than one region.

Cloud disaster recovery strategies (continued from part 1)

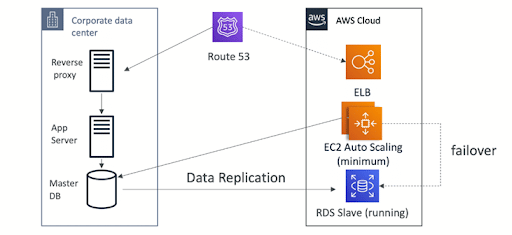

1. Warm Standby

If you use the warm standby strategy for cloud disaster recovery, you keep a scaled-down but fully functional copy of your production environment running in another region. Because that copy is always running, warm standby extends the pilot light concept and shortens recovery time: instead of provisioning infrastructure during a disaster, you only need to scale up what is already there. Warm standby also makes it easier to test recovery, or to build a continuous testing environment, which strengthens your ability to recover from a data or infrastructure crisis.
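
A minimal sketch of the recovery step, assuming the DR region’s fleet is an EC2 Auto Scaling group: failing over to a warm standby largely comes down to raising that group’s capacity from standby size to production size. The group name and capacity numbers are hypothetical, and the Auto Scaling client is passed in (for example one created with `boto3.client("autoscaling", region_name=...)`) so the logic can be exercised without AWS credentials. `update_auto_scaling_group` is the boto3 Auto Scaling call that changes `MinSize`, `MaxSize`, and `DesiredCapacity`.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass
class CapacityPlan:
    """Auto Scaling group sizes (hypothetical values for illustration)."""
    min_size: int
    max_size: int
    desired: int


# Hypothetical sizes: the warm standby runs a fraction of production capacity.
STANDBY = CapacityPlan(min_size=1, max_size=4, desired=2)
PRODUCTION = CapacityPlan(min_size=6, max_size=24, desired=12)


def scale_out_request(group_name: str, plan: CapacityPlan) -> Dict[str, Any]:
    """Build keyword arguments for update_auto_scaling_group."""
    if not plan.min_size <= plan.desired <= plan.max_size:
        raise ValueError("desired capacity must lie between min and max size")
    return {
        "AutoScalingGroupName": group_name,
        "MinSize": plan.min_size,
        "MaxSize": plan.max_size,
        "DesiredCapacity": plan.desired,
    }


def fail_over(autoscaling_client: Any, group_name: str) -> Dict[str, Any]:
    """Scale the DR region's group from standby size up to production size.

    autoscaling_client is assumed to be a boto3 Auto Scaling client created
    in the DR region (or any stub exposing the same method for testing).
    """
    request = scale_out_request(group_name, PRODUCTION)
    autoscaling_client.update_auto_scaling_group(**request)
    return request
```

Keeping the scale-out in code fits the continuous testing idea: a test can call `fail_over` against a stub client on every build, and a recovery drill can call it against the real DR region.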

AWS Services for Warm Standby strategy

Warm standby uses all of the AWS services covered under backup & restore and pilot light, including those for active/passive traffic routing and for infrastructure deployment such as EC2 instances. Within an AWS region, AWS Auto Scaling can scale Amazon EC2 instances, Amazon ECS tasks, Amazon DynamoDB throughput, and Amazon Aurora replicas. Amazon EC2 Auto Scaling spreads EC2 instances across Availability Zones, which makes the workload resilient within a region. As part of a pilot light or warm standby strategy, use auto scaling to scale out your DR region to full production capacity.

Amazon Route 53 can also be used, with a failover routing policy and health checks. When a disaster strikes and the infrastructure in the primary region goes offline, Route 53 automatically routes traffic to the infrastructure in the second region.
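
Route 53 failover routing is configured as a pair of records with the same name: a `PRIMARY` record pointing at the first region and a `SECONDARY` record pointing at the DR region, each tied to a health check. The sketch below only builds the change batch. The domain, IP addresses (taken from documentation ranges), and health check IDs are hypothetical placeholders, and the records are assumed to be simple A records rather than aliases to a load balancer.

```python
from typing import Any, Dict, List


def failover_record(name: str, role: str, ip: str, health_check_id: str,
                    ttl: int = 60) -> Dict[str, Any]:
    """Build one failover A record for a Route 53 change batch.

    role must be "PRIMARY" (first region) or "SECONDARY" (DR region).
    """
    if role not in ("PRIMARY", "SECONDARY"):
        raise ValueError("role must be PRIMARY or SECONDARY")
    return {
        "Action": "UPSERT",
        "ResourceRecordSet": {
            "Name": name,
            "Type": "A",
            "SetIdentifier": f"{role.lower()}-region",  # must be unique per record
            "Failover": role,
            "TTL": ttl,
            "ResourceRecords": [{"Value": ip}],
            "HealthCheckId": health_check_id,
        },
    }


def failover_change_batch(name: str, primary_ip: str, primary_hc: str,
                          secondary_ip: str,
                          secondary_hc: str) -> Dict[str, List[Dict[str, Any]]]:
    """Pair a primary record (first region) with a secondary one (DR region)."""
    return {
        "Changes": [
            failover_record(name, "PRIMARY", primary_ip, primary_hc),
            failover_record(name, "SECONDARY", secondary_ip, secondary_hc),
        ]
    }
```

The resulting dictionary could be passed as `ChangeBatch` to the boto3 Route 53 client’s `change_resource_record_sets(HostedZoneId=..., ChangeBatch=...)`, using your own hosted zone ID. Route 53 answers with the primary record while its health check passes and returns the secondary record when it fails.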

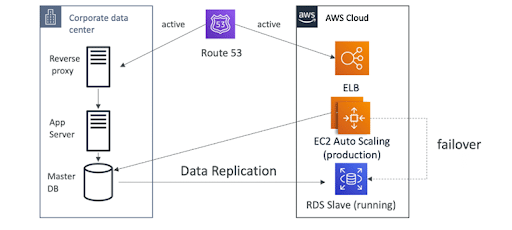

2. Multi-Site or Hot-Site

With this strategy, your workload runs at full production scale in more than one region. Using either a multi-site active/active or a hot standby active/passive strategy, you run the workload simultaneously in multiple regions. Multi-site active/active serves traffic from every region it is deployed to, so users can reach the workload in any of those regions. Hot standby serves traffic from a single region only: users are routed to that region, and the other regions are reserved for disaster recovery and do not receive traffic.

With the right technology choices and implementation, a multi-site or hot-site approach can reduce recovery time to almost zero for most disasters (data corruption is an exception, since it may require restoring from backups, which usually means a non-zero recovery point). Keep in mind, however, that this is the most complex and expensive disaster recovery approach. For most customers who want a complete, full-scale setup in a second region, active/active is the option that makes sense.
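
The routing difference between the two variants can be sketched in a few lines of plain Python. This is a simplified, hypothetical model: the region names, weights, and health map are illustrative, and in a real deployment these decisions are made by DNS or the edge network, not by application code. Hot standby sends everything to one region until it fails, while active/active spreads requests across every healthy region.

```python
import random
from typing import Dict, Optional


def route_hot_standby(primary: str, standby: str,
                      healthy: Dict[str, bool]) -> Optional[str]:
    """Hot standby: all traffic goes to the primary region; the standby
    region receives traffic only when the primary is unhealthy."""
    if healthy.get(primary, False):
        return primary
    if healthy.get(standby, False):
        return standby
    return None  # no healthy region left


def route_active_active(weights: Dict[str, int], healthy: Dict[str, bool],
                        rng: random.Random) -> Optional[str]:
    """Active/active: every healthy region serves a share of requests in
    proportion to its weight; unhealthy regions are skipped."""
    candidates = {r: w for r, w in weights.items()
                  if healthy.get(r, False) and w > 0}
    if not candidates:
        return None
    regions = list(candidates)
    return rng.choices(regions, weights=[candidates[r] for r in regions], k=1)[0]
```

Passing in a seeded `random.Random` keeps the model deterministic for testing; the point is only to make concrete that a failed region costs hot standby a full switch, while active/active simply stops sending that region its share.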

AWS Services for Multi-Site or Hot-Site Approach

Point-in-time data backup, data replication, active/active traffic routing, and infrastructure scaling and deployment (including EC2 instances) are all handled in this strategy by the AWS services already covered under backup & restore, pilot light, and warm standby.

In the active/passive scenarios (pilot light and warm standby), both Amazon Route 53 and AWS Global Accelerator can direct network traffic to the active region. In an active/active strategy, both services let you define policies that determine which users are sent to which active regional endpoint. With AWS Global Accelerator, you can set a traffic dial to control the percentage of traffic directed to each regional endpoint group. Amazon Route 53 supports this percentage-based approach through weighted routing, along with several other routing policies such as latency-based and geolocation routing. Global Accelerator also lowers request latency by automatically using AWS’s large network of edge locations to bring traffic onto the AWS network backbone quickly.
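
For the percentage technique, here is a sketch of Route 53 weighted records for an active/active split. Route 53 answers each query with a record chosen in proportion to its weight divided by the sum of all weights for that name, so percentages that add up to 100 can be used directly as weights (the `Weight` field accepts 0 to 255). The domain, region-specific endpoints, and split values are hypothetical, and the function only builds the list of changes that `change_resource_record_sets` expects.

```python
from typing import Any, Dict, List


def weighted_records(name: str, split: Dict[str, int],
                     endpoints: Dict[str, str],
                     ttl: int = 60) -> List[Dict[str, Any]]:
    """Build Route 53 weighted CNAME records for an active/active split.

    split maps a region name to its intended share of traffic in percent;
    endpoints maps the same region names to hypothetical regional hostnames.
    """
    if sum(split.values()) != 100:
        raise ValueError("percentages must add up to 100")
    changes = []
    for region, pct in split.items():
        changes.append({
            "Action": "UPSERT",
            "ResourceRecordSet": {
                "Name": name,
                "Type": "CNAME",
                "SetIdentifier": region,  # one weighted record per region
                "Weight": pct,            # 0-100 fits Route 53's 0-255 range
                "TTL": ttl,
                "ResourceRecords": [{"Value": endpoints[region]}],
            },
        })
    return changes


def effective_share(changes: List[Dict[str, Any]]) -> Dict[str, float]:
    """Fraction of DNS answers each record should receive: weight / total."""
    total = sum(c["ResourceRecordSet"]["Weight"] for c in changes)
    return {c["ResourceRecordSet"]["SetIdentifier"]:
            c["ResourceRecordSet"]["Weight"] / total for c in changes}
```

For example, `weighted_records("app.example.com", {"us-east-1": 70, "eu-west-1": 30}, {...})` would send roughly 70% of DNS answers to the first region. A Global Accelerator equivalent would adjust the `TrafficDialPercentage` of each region’s endpoint group, for example through the boto3 `globalaccelerator` client’s `update_endpoint_group` call.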